MCP from First Principles to Production: Why JSON-RPC over stdio beat WebSockets + OpenAPI

Developers who have used at least one LLM API (Claude, GPT, Gemini) and want to build production-grade integrations using MCP. Familiar with REST APIs, basic auth flows, and terminal-driven workflows.

- Explain WHY MCP chose JSON-RPC over stdio instead of WebSockets or a REST+OpenAPI approach — and when that choice matters for your own architecture

- Read and write a complete MCP server from scratch, handling the full JSON-RPC lifecycle over stdio and HTTP streaming

- Choose the right MCP primitive (Tool, Resource, or Prompt) for any integration requirement with a written decision rule

- Wire up OAuth 2.1 + DPoP auth on an MCP server so it rejects unauthorized requests and produces a full audit trail

- Deploy an MCP server behind a gateway to a team of 1,000 users with structured logs, RBAC, and zero-downtime rollout

Why MCP exists — the design problem it actually solves


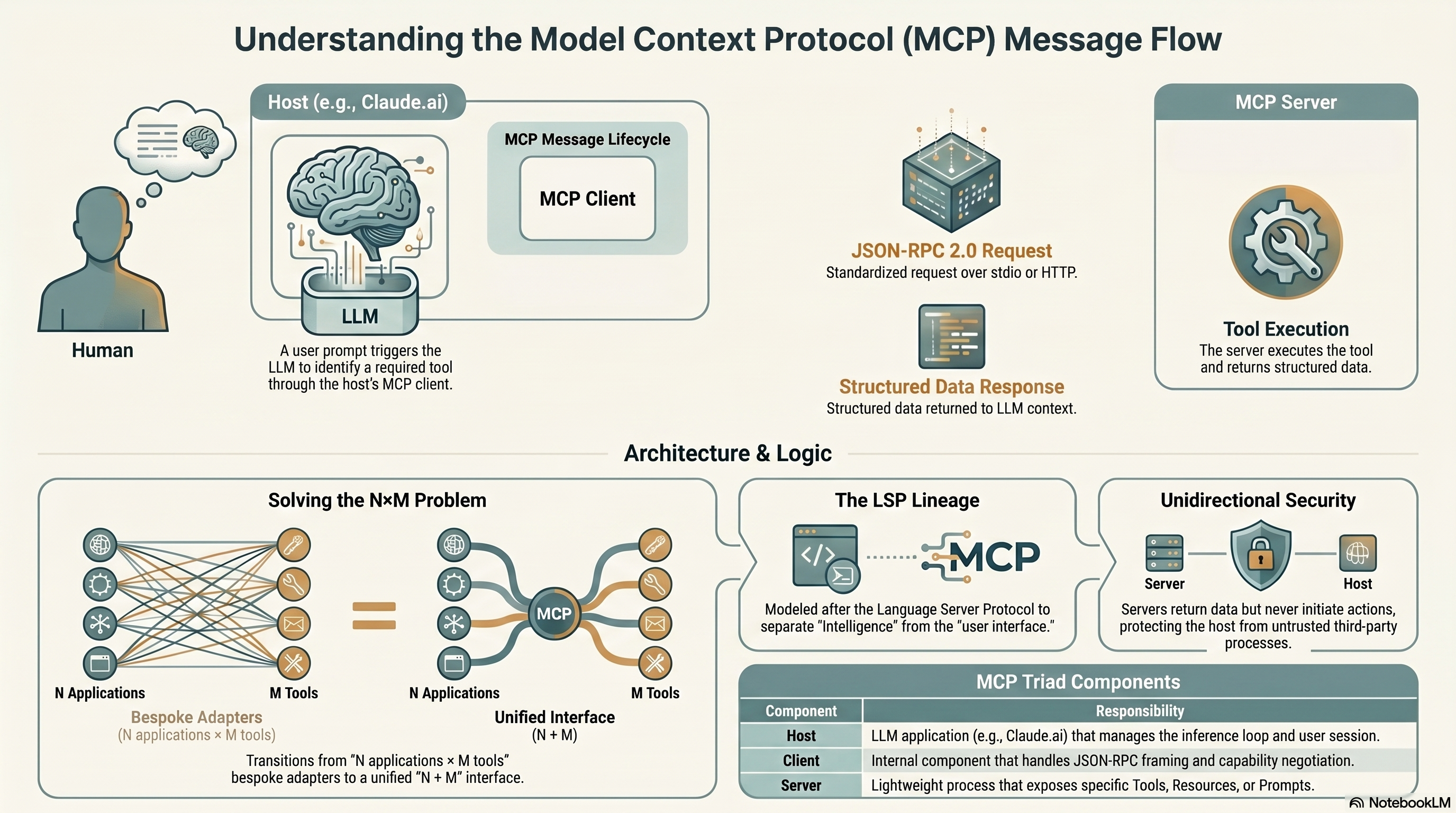

The Model Context Protocol (MCP) is an open, vendor-neutral protocol introduced by Anthropic on 25 November 2024 for standardising how AI applications connect to external data sources and tools through a JSON-RPC 2.0 wire format over stdio or HTTP transports.[1] By April 2026, the protocol had attracted integrations from multiple AI development platforms, with the official 2026 roadmap charting its trajectory for remote-server authentication and gateway discovery.[2]

Most tutorials start with a hello-world tool call and a five-line SDK import. That's fine for demos. It doesn't explain why the protocol is shaped the way it is, and without that, every 3 AM debugging session feels like archaeology. This chapter answers the why: what design constraint forced a specific set of decisions, what alternatives were rejected and for which precise reasons, and what problems MCP explicitly does not attempt to solve. Once that picture is clear, the rest of the specification reads like a logical consequence rather than a set of arbitrary choices.

Key facts

- Announced: 25 November 2024 by Anthropic; specification published at spec.modelcontextprotocol.io.[1]

- Wire protocol: JSON-RPC 2.0 (newline-delimited) over stdio or HTTP+SSE (Streamable HTTP); not REST, not WebSocket, not gRPC.[1]

- Three primitives: Tools (model-initiated side-effects), Resources (app-controlled read-only data), Prompts (user-initiated templates).[1]

- LSP lineage: Architecture is explicitly modelled on the Language Server Protocol, which solved the identical N×M problem for editors in 2016.[3]

- Auth trajectory: The 2026 roadmap targets OAuth 2.1 + DPoP token binding (SEP-1932, an active proposal) for remote-server authentication, plus gateway discovery via .well-known metadata.[2]

- Adoption baseline (April 2026): Claude.ai ships MCP natively; multiple AI development platforms and IDEs have announced MCP-compatible integrations.[1]

- Governance: Specification is Apache 2.0. Anthropic chairs the working group but does not hold exclusive change authority.

The N×M integration problem

Before MCP existed, every team building an LLM application faced the same structural trap.

Suppose you are building an AI coding assistant. Your users need the model to read GitHub repos, query Jira tickets, pull documentation from Confluence, run tests in CI, and check Datadog metrics. That's five integrations. Each integration requires: understanding the third-party API, writing an adapter that maps the API's response shape to whatever JSON structure your model prefers, handling auth (likely OAuth for each service), dealing with pagination, and managing error recovery. Each adapter is bespoke code that belongs to your application and nobody else's.

Now suppose a second team is building an AI support agent. They need the same five integrations plus Salesforce and Zendesk. They repeat the work. A third team building an AI DevOps assistant needs most of the same integrations again. The result is N applications × M tools = N×M integrations, each implemented slightly differently, each with its own bugs, its own auth model, and its own failure modes.

This isn't a hypothetical. In the period leading up to MCP's launch, teams building on Claude and other LLMs had each written bespoke integration adapters for every tool they needed — a landscape of redundant, incompatible implementations, each solving the same underlying problem in isolation.[1] Every major LLM provider observed the same fragmentation pattern. The ecosystem was splitting along exactly the axis that makes tooling ecosystems fail: each participant solving the same local problem independently, without a shared protocol layer.

The textbook fix for N×M proliferation is to insert a standard: instead of N×M direct connections, you get N+M relationships to a common interface. That is exactly what MCP does for LLM tool integrations.
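The arithmetic is easy to check. The sketch below uses hypothetical function names and assumes the worst case, in which every application needs every tool; it compares the two counts for three applications and seven tools, roughly the scenario described above.

```python
def adapters_without_protocol(apps: int, tools: int) -> int:
    """Every application writes its own adapter for every tool."""
    return apps * tools


def components_with_protocol(apps: int, tools: int) -> int:
    """Each application ships one client; each tool ships one server."""
    return apps + tools


if __name__ == "__main__":
    # Worst case: all three applications need all seven tools.
    print(adapters_without_protocol(3, 7))  # 21
    print(components_with_protocol(3, 7))   # 10
```

The gap widens as either number grows, because one side of the comparison is a product and the other is a sum.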

Three alternatives that didn't survive contact with reality

The N×M framing explains that a protocol was needed. It doesn't explain why MCP looks the way it does. For that, you need to understand what was rejected and why.

Alternative 1: Custom REST adapters (the status quo before MCP)

The simplest approach is no approach: each LLM application team writes its own integration layer, calling third-party REST APIs directly and shaping the response JSON however suits the model's context window. Most of the bespoke adapters that proliferated before MCP followed this pattern.

The specific failure mode: REST APIs are designed for human-operated software clients with stable lifecycles. They assume: a long-lived HTTP connection, a client that can parse HTML error pages or inconsistent response schemas, retry logic calibrated to human workflows, and auth tokens whose expiry is handled by a logged-in user session. LLM tool calls have none of these properties. They're ephemeral (one inference pass), unbounded in concurrency (the model can call tools in parallel), and entirely programmatic — there's no human in the loop to re-authenticate when a token expires mid-task. Custom REST adapters fail silently under these conditions, and the failure only surfaces as a degraded model response that the user may not recognise as a tool failure.

The deeper problem: no REST adapter is reusable across applications. If two teams are both querying the same GitHub API, they ship two adapters. Neither can benefit from the other's bug fixes.

Alternative 2: WebSocket hub

A more architecturally sophisticated approach: build a central hub that all LLM applications connect to via WebSocket. The hub speaks to each third-party service and exposes a unified API to the models. This is roughly how several enterprise "AI middleware" products positioned themselves in 2023–2024.

The specific failure mode: WebSocket connections are stateful. The hub must maintain a live socket per LLM application session, track which model is mid-task, and route responses back to the right session. Under load, this creates a complex multiplexed-session management problem that grows with the number of concurrent LLM calls. More critically: the hub becomes a single point of failure. If it goes down — or even experiences elevated latency — every LLM application it serves degrades simultaneously.

There is a subtler problem for local development. WebSocket hubs require network reachability. An MCP server for a local tool (like a file system reader or a local database) runs as a child process on the developer's machine. A WebSocket hub requires that local process to expose a public network address, which is either a security hole or an operational headache. stdio sidesteps this entirely: the MCP server is a child process of the host application, communicating over a Unix pipe. No network, no auth surface, trivially restartable.

Alternative 3: OpenAPI spec passthrough

A third approach: standardise the description format for tools rather than the transport. Publish an OpenAPI 3.1 spec for each service, have the LLM read the spec, and generate API calls directly. Some LLM providers experimented with this in 2023.

The specific failure mode: OpenAPI describes what an API does, not how an LLM should call it. The semantics of "what parameters to pass and when" are not representable in OpenAPI's schema layer — they require the kind of natural-language description that MCP's Tool definition carries in its description field. OpenAPI also provides no mechanism for streaming partial results back to the model (which matters enormously for long-running tool calls), no capability negotiation (so the model doesn't know which version of the tool is available), and no structured error typing that the model can reason about.

More critically: OpenAPI passthrough gives the model direct access to an API with no mediation layer. If the model makes a malformed call — which LLMs do, especially with complex parameter schemas — the error comes back as a raw HTTP 422 or 500, which the model must parse without context. MCP's typed error responses (error.code, error.message, structured data) are designed precisely so that the model has enough signal to retry or escalate without human intervention.[5]

I want to understand the N×M problem concretely. Without any standard protocol: if I have 4 LLM applications (a coding assistant, a support agent, a data analyst tool, and a DevOps helper) and each ne…

Show expected output

Without a standard protocol, you need 4 × 6 = 24 integration adapters: each application writes its own connector to each tool.

With a standard protocol like MCP:

- Each of the 6 tools publishes 1 MCP server (6 total)

- Each of the 4 applications implements 1 MCP client (4 total)

- Total integration surface: 6 + 4 = 10 pieces, not 24

The trade-offs:

1. Protocol overhead: every call goes through JSON-RPC framing and capability negotiation.

2. Least-common-denominator risk: the protocol must be general enough for all tools.

3. Versioning coupling: spec changes require both clients and servers to update.

4. Discovery bootstrapping: you need a way for applications to find servers, which the 2026 roadmap solves with .well-known gateway metadata.

The trade-off is worth it once N and M are both greater than ~3.

The LSP lineage: a protocol that solved this before

The Model Context Protocol did not invent its own design. It borrowed from a protocol that already solved the identical N×M problem in a different domain: the Language Server Protocol (LSP), introduced by Microsoft in 2016.[3]

Before LSP, every code editor (VS Code, Vim, Emacs, Eclipse, IntelliJ) had to implement language support for every programming language — syntax highlighting, go-to-definition, autocomplete, rename refactor. The result was the same N×M explosion: M editors × N languages = M×N implementations, each with different quality and feature parity.

LSP solved it with a single insight: separate the language intelligence from the editor UI. A language server runs as a local process and speaks a standard JSON-RPC protocol. Any editor that implements the LSP client can talk to any language server. Today, the Python LSP server (pylsp) works identically in VS Code, Neovim, Emacs, and Helix. The editor teams wrote one client; the language teams wrote one server.

MCP borrows three specific architectural choices from LSP:

1. JSON-RPC 2.0 over stdio for local servers. LSP uses JSON-RPC over stdio for local servers. MCP uses the same message format over the same channel for the same reason: it is easy to parse and has no external dependencies. Only the framing differs: LSP prefixes each message with a Content-Length header, while MCP's stdio transport delimits messages with newlines. A process that reads from stdin and writes to stdout is the simplest possible IPC primitive: no sockets, no shared memory, no OS-specific APIs.[1][4]

2. Capability negotiation at handshake time. LSP's initialize / initialized handshake lets the client and server advertise which features each supports before any work begins. MCP's initialize lifecycle follows the same pattern: the client sends its capabilities, the server responds with its own, and both parties agree on the protocol version. This means a new client can talk to an old server (or vice versa) without crashing — they negotiate down to the intersection of their capabilities.[1]

3. Stateful session model. LSP maintains a session per open project. MCP maintains a session per connected client. Both sessions are terminated when the process exits or the connection closes. This is in contrast to REST APIs, which are stateless by design. The stateful model allows MCP servers to maintain context between calls (e.g., an open database connection, a cached authentication token) without re-establishing it on every request.

The explicit LSP comparison appears in the original MCP announcement from Anthropic and in the specification's architecture documentation.[1]
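To make the handshake concrete, here is a minimal client-side sketch of the negotiation described above. The names `SUPPORTED_VERSIONS`, `build_initialize`, `accept_initialize`, and the `sketch-client` identity are illustrative assumptions, not part of any SDK; the message fields follow the shapes used elsewhere in this chapter.

```python
# Assumption: the protocol versions this hypothetical client can speak.
SUPPORTED_VERSIONS = ["2024-11-05"]


def build_initialize(request_id: int) -> dict:
    """Build the client's initialize request."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": SUPPORTED_VERSIONS[-1],
            "capabilities": {},
            "clientInfo": {"name": "sketch-client", "version": "0.1"},
        },
    }


def accept_initialize(response: dict) -> set[str]:
    """Return the server's advertised capability names.

    Raises RuntimeError if the server answered with a protocol version
    this client cannot speak.
    """
    result = response["result"]
    version = result["protocolVersion"]
    if version not in SUPPORTED_VERSIONS:
        raise RuntimeError(f"unsupported protocol version: {version}")
    return set(result.get("capabilities", {}))


# Sent by the client once it has accepted the server's response.
INITIALIZED = {"jsonrpc": "2.0", "method": "notifications/initialized"}


if __name__ == "__main__":
    reply = {
        "jsonrpc": "2.0",
        "id": 1,
        "result": {
            "protocolVersion": "2024-11-05",
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "hello-mcp", "version": "0.1.0"},
        },
    }
    print(sorted(accept_initialize(reply)))  # ['tools']
```

A client built this way would only call tools/list when "tools" is in the returned set, which is the practical payoff of negotiating before doing any work.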

Compare LSP's initialize/initialized handshake with MCP's initialize lifecycle. Walk me through exactly what fields are exchanged in MCP's initialize request and response, and explain what each side l…

Show expected output

MCP's initialize handshake (spec §3.1):

Client → Server (initialize request):

- protocolVersion: the spec version the client supports (e.g. '2024-11-05')

- capabilities: object advertising what the client can handle (roots, sampling)

- clientInfo: { name, version } for diagnostics

Server → Client (initialize response):

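- protocolVersion: the version the server will use (the client's requested version if the server supports it; otherwise another version the server supports, which the client may reject by disconnecting)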

- capabilities: object advertising what the server exposes (tools, resources, prompts, logging)

- serverInfo: { name, version } for diagnostics

Client → Server (notifications/initialized): confirms the client is ready.

What each side learns that a REST /health check cannot tell you:

1. Protocol version compatibility: the client knows immediately whether the server speaks a version it understands. A /health endpoint returns 200 OK regardless of spec version.

2. Capability surface: the client learns exactly which primitives (tools/resources/prompts) the server supports before making a single call. No trial-and-error.

3. Agreed protocol version: both sides settle on a single version before any work begins, so neither sends fields the other can't parse.

4. Server identity: serverInfo lets the host log which implementation is running, useful for debugging multi-server setups.

A REST /health check tells you only that the server is alive. MCP's handshake tells you what it can do and whether you can talk to it.

The host / client / server triad

MCP defines a precise three-way topology that almost every tutorial glosses over. Understanding it prevents an entire class of architectural mistakes.

Host — The LLM application. Claude.ai is a host. Cursor is a host. Your custom agent is a host. The host is responsible for: starting and stopping MCP server processes, managing user sessions, holding the LLM inference loop, and deciding which tool results to include in the model's context.

Client — A component inside the host that manages a single MCP server connection. A host can maintain multiple clients simultaneously (one per MCP server). The client handles: JSON-RPC framing, capability negotiation, request multiplexing, and lifecycle management for one server. It is not user-facing; it's plumbing.

Server — The MCP server itself. A server exposes some combination of Tools, Resources, and Prompts, and it serves exactly one domain: a GitHub MCP server knows about repos and files; a Postgres MCP server knows about tables and queries. Servers are intentionally narrow.

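The key constraint: a server never calls other servers, and it cannot act on the host on its own initiative. In the normal flow, information moves in one direction: the client calls the server, the server returns results, the client passes them to the host, and the host injects them into the model context. The few server-initiated requests the protocol defines (such as sampling, or listing roots) are available only when the client advertised that capability during initialize, and the host decides whether to honour each one. This unidirectional constraint is what makes MCP servers safe to run as untrusted third-party processes: a malicious server can return garbage, but it cannot initiate actions against the host or against other connected services.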

<Callout type="warning"> The unidirectional constraint has teeth. If you design an MCP server that tries to call back into the host (e.g., to trigger another tool call), you have broken the security model. The server has no channel for this — and any workaround (e.g., embedding a callback URL in a tool result) should be treated as a red flag in code review. See courses/mcp-from-first-principles-to-production/02-json-rpc-wire-protocol for how the JSON-RPC framing enforces this at the wire level. </Callout>

<KnowledgeCheck questions={[ { question: "An MCP server embeds a callback URL in a tool result, and the host application uses it to trigger a call on a different MCP server. Why is this a security concern in the MCP architecture?", answers: [ "Callback URLs are not valid JSON-RPC response fields", "The server has bypassed the unidirectional constraint — it is covertly initiating an action chain the host did not authorise, outside the normal audit trail", "HTTP callbacks introduce latency incompatible with stdio transport", "MCP servers are not permitted to return string fields in tool results" ], correct: 1, explanation: "The MCP security model depends on servers only returning data — never initiating actions. When a server embeds a callback URL to trigger further tool calls, it is covertly influencing host behaviour, bypassing the access controls and audit trail the host maintains. This is a common prompt-injection vector in agentic systems. MCP's unidirectional architecture makes server intent auditable by restricting servers to returning structured data only." } ]} />

What MCP deliberately does NOT solve

This is the contrarian angle that almost every MCP post omits. MCP is not universal agent middleware. It is a narrow protocol for one specific job — standardised context injection into LLM inference — and its narrowness is what makes it deployable. Every problem MCP does not solve is a problem it deliberately deferred to a higher layer.

Agent orchestration. There is no flow control in MCP, no mechanism for a server to direct the model to call another tool, no branching logic. Orchestration (deciding which tool to call, in what order, with what retry logic) is the host's job. This is intentional: if the protocol encoded orchestration, every orchestration model (ReAct, plan-and-execute, tree-of-thought) would require a protocol extension. By leaving orchestration out, MCP can be used with any orchestration model without modification.[2]

Multi-agent coordination. Two MCP-enabled agents cannot coordinate through MCP itself. They would need a separate channel — a message queue, a shared database, or an orchestrator agent that calls both via its own MCP clients. The 2026 roadmap explicitly names multi-agent coordination as a future consideration, not a current feature.[2]

Session memory and persistence. When an MCP server process exits, its state is gone. Persistent memory (conversation history, user preferences, cross-session context) is the host's responsibility. Servers that want to persist state must use an external database and manage it themselves.

Model routing. Which model gets called, at what temperature, with what context window budget — none of that is MCP's concern. MCP is below the model layer; it's the mechanism by which context reaches a model, not the mechanism by which a model is selected or invoked.

Billing and rate limiting at the server level. A raw MCP server has no concept of who is calling it or how many times. That's what the gateway layer (Chapter 5) adds — RBAC, per-user rate limits, and audit trails. Running MCP servers without a gateway on a multi-user system is like running a database without connection pooling or access control.

The deliberate narrowness is the design. A protocol that tried to solve all five of these problems would be so complex that no two implementations would be compatible. MCP's power comes from what it excludes.

I'm building an AI coding assistant that needs to: (1) read files from a GitHub repo, (2) run tests in CI and get results, (3) remember which files the user worked on across sessions, (4) decide wheth…

Show expected output

1. Read files from GitHub repo → MCP's job. A GitHub MCP server with a read_file Resource or Tool handles this directly.

2. Run tests in CI and get results → MCP's job. A CI MCP server with a run_tests Tool handles this. The Tool returns structured results the model can reason over.

3. Remember which files the user worked on across sessions → NOT MCP's job; it is the host's responsibility. MCP servers are stateless between sessions. Persistent memory belongs to your application layer: a database, vector store, or session management system the host controls.

4. Decide whether to call GitHub or CI first → NOT MCP's job; this is orchestration. The model (guided by your system prompt and tool descriptions) decides call order. MCP just executes whichever call the model makes. Deterministic ordering is enforced by your host's orchestration logic.

5. Limit CI calls per user per hour → NOT MCP's job; this belongs to the gateway/infrastructure layer. A raw MCP server has no concept of users or rate limits. You need an MCP gateway (Chapter 5) with RBAC and rate-limiting policies, or a reverse proxy that enforces quotas before requests reach the server.

A minimal MCP server: hello world in ~38 lines

The best way to make the architecture concrete is to read a complete, working MCP server. The following Python implementation is stripped to the minimum that satisfies the MCP specification's initialize handshake and handles a single tool call over stdio.[1]

```python
#!/usr/bin/env python3
"""Minimal MCP server: exposes one tool (echo) over stdio transport."""
import json
import sys


def send(msg: dict) -> None:
    # Never use print() here: it writes to stdout and corrupts the stdio channel
    sys.stdout.write(json.dumps(msg) + "\n")
    sys.stdout.flush()


def handle(msg: dict) -> None:
    method = msg.get("method")
    id_ = msg.get("id")

    if method == "initialize":
        send({
            "jsonrpc": "2.0",
            "id": id_,
            "result": {
                "protocolVersion": "2024-11-05",
                "capabilities": {"tools": {}},
                "serverInfo": {"name": "hello-mcp", "version": "0.1.0"}
            }
        })

    elif method == "tools/list":
        send({
            "jsonrpc": "2.0",
            "id": id_,
            "result": {"tools": [{
                "name": "echo",
                "description": "Returns the input string unchanged.",
                "inputSchema": {
                    "type": "object",
                    "properties": {"message": {"type": "string"}},
                    "required": ["message"]
                }
            }]}
        })

    elif method == "tools/call":
        args = msg.get("params", {}).get("arguments", {})
        send({
            "jsonrpc": "2.0",
            "id": id_,
            "result": {"content": [{"type": "text", "text": args.get("message", "")}]}
        })

    elif method == "notifications/initialized":
        pass  # Notifications receive no response

    else:
        # JSON-RPC 2.0 §5: requests carrying an id MUST receive a response;
        # only notifications (no id field) may be silently dropped.
        if id_ is not None:
            send({
                "jsonrpc": "2.0",
                "id": id_,
                "error": {"code": -32601, "message": "Method not found"}
            })


for line in sys.stdin:
    line = line.strip()
    if line:
        handle(json.loads(line))
```

Run it and drive it manually to see the full lifecycle:

```bash
python3 hello_mcp.py << 'EOF'
{"jsonrpc":"2.0","id":1,"method":"initialize","params":{"protocolVersion":"2024-11-05","capabilities":{},"clientInfo":{"name":"test-client","version":"0.1"}}}
{"jsonrpc":"2.0","method":"notifications/initialized"}
{"jsonrpc":"2.0","id":2,"method":"tools/list","params":{}}
{"jsonrpc":"2.0","id":3,"method":"tools/call","params":{"name":"echo","arguments":{"message":"hello, MCP"}}}
EOF
```

Expected output:

```json
{"jsonrpc": "2.0", "id": 1, "result": {"protocolVersion": "2024-11-05", "capabilities": {"tools": {}}, "serverInfo": {"name": "hello-mcp", "version": "0.1.0"}}}
{"jsonrpc": "2.0", "id": 2, "result": {"tools": [{"name": "echo", "description": "Returns the input string unchanged.", "inputSchema": {"type": "object", "properties": {"message": {"type": "string"}}, "required": ["message"]}}]}}
{"jsonrpc": "2.0", "id": 3, "result": {"content": [{"type": "text", "text": "hello, MCP"}]}}
```

Every field maps directly to a section of the MCP specification.[1] The protocolVersion in the initialize response is the spec version the server implements — clients use this to decide whether to proceed or reject the connection. The capabilities object is the negotiation surface: if your server doesn't include {"resources": {}} in capabilities, the client will not attempt resources/list. The content array in a tool response is typed — each item has a type field (text, image, resource) that tells the host how to render the result.

Notice what is not in this server: no authentication, no rate limiting, no session management, no orchestration. Those are host and gateway concerns. This server's only job is to expose the echo tool correctly.
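The same lifecycle can be driven from Python instead of a shell heredoc, which is closer to what a host's client component does when it spawns a server as a child process. The sketch below is a batch-mode approximation under stated assumptions: `drive` is a hypothetical helper, it sends every message up front and reads replies only after the child exits, and it assumes the server speaks newline-delimited JSON on stdin/stdout. A real host keeps the pipe open and exchanges messages interactively.

```python
import json
import subprocess
import sys


def drive(cmd: list[str], messages: list[dict]) -> list[dict]:
    """Spawn a server as a child process and run one batch of messages.

    Each message is written as one line of JSON to the child's stdin;
    stdin is then closed and every line the child wrote to stdout is
    parsed and returned in order.
    """
    payload = "".join(json.dumps(m) + "\n" for m in messages)
    proc = subprocess.run(
        cmd,
        input=payload,
        capture_output=True,  # stdout carries replies; stderr is kept separate
        text=True,
        timeout=10,           # a hung server should fail fast, not block forever
        check=True,           # a non-zero exit code raises CalledProcessError
    )
    return [json.loads(line) for line in proc.stdout.splitlines() if line.strip()]
```

Assuming the server above is saved as hello_mcp.py, calling `drive([sys.executable, "hello_mcp.py"], messages)` with the four messages from the shell session should return three replies (ids 1, 2, and 3), because the notification produces no response.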

I have the minimal echo MCP server from this chapter. Extend it to add a second tool called 'reverse' that takes a 'text' string parameter and returns the string reversed. Show: (1) the updated tools/…

Show expected output

(1) Updated tools/list result body:

{

<Callout type="hot"> Why stdio, not HTTP, for local servers? This server reads from stdin and writes to stdout; there is no network socket. That means: zero firewall configuration, zero network authentication surface, trivial restart (kill and respawn the process), and clean process isolation (the server dies when its parent dies). The stdio transport is not a dev-environment shortcut. It is the production transport for any MCP server that runs on the same machine as the host. Chapter 2 covers when to switch to HTTP+SSE (Streamable HTTP) for remote servers, and what you give up when you do. </Callout>

Knowledge checks

<KnowledgeCheck questions={[ { question: "In the MCP architecture, which component decides which tool to call next — the host, the client, or the server?", answers: [ "The MCP server, based on its tool metadata", "The MCP client, based on capability negotiation", "The host (the LLM application), based on model output", "The MCP specification mandates a round-robin call order" ], correct: 2, explanation: "The host runs the LLM inference loop and acts on model output. The model (guided by tool descriptions) suggests which tool to call; the host executes that call via its MCP client. The server has no visibility into this decision." }, { question: "Which of the following is NOT a problem that MCP was designed to solve?", answers: [ "Standardising how LLM applications call external tools", "Eliminating N×M custom integration adapters", "Persisting conversation history across sessions", "Defining a capability negotiation handshake between client and server" ], correct: 2, explanation: "Session persistence is explicitly out of scope for MCP. The protocol is stateless between sessions — a server process that exits loses its state. Persistent memory is the host application's responsibility." }, { question: "The MCP specification uses JSON-RPC 2.0 over stdio for local servers. Which protocol directly inspired this design choice?", answers: [ "GraphQL subscriptions", "gRPC bidirectional streaming", "The Language Server Protocol (LSP)", "WebSocket over TLS" ], correct: 2, explanation: "MCP is explicitly modelled on LSP, which uses JSON-RPC over stdio to solve the identical N×M problem for editors and language tooling. The architecture documentation in the MCP specification cites this lineage directly." } ]} />

<KnowledgeCheck questions={[ { question: "A colleague proposes building a WebSocket hub as universal middleware for all LLM tool integrations. What is the specific architectural failure mode of this approach?", answers: [ "WebSocket is too slow for LLM response times", "The hub becomes a stateful single point of failure, and local-process tools cannot be reached without a public network address", "WebSocket is not supported by JSON-RPC 2.0", "LLMs cannot parse WebSocket frames natively" ], correct: 1, explanation: "Stateful session management at scale and the requirement for network reachability are the two structural problems. Local tools have no natural network address — stdio process spawning sidesteps this completely." } ]} />

Hands-on exercise: map your own integrations onto the MCP model

Pick three integrations you have built or maintain — REST API calls your application makes, database queries, file system reads, third-party SDKs. For each, answer:

- Host / Client / Server assignment: If this were an MCP server, which component would own the domain logic? What would the server's name be?

- Primitive classification: Is this primarily a Tool (model-initiated, side-effects acceptable), a Resource (app-controlled, read-only context), or a Prompt (user-triggered template)?

- What MCP would NOT handle: Identify one concern that MCP leaves to your application layer — auth, rate limiting, caching, session state — and name which layer owns it.

Write three one-paragraph descriptions, one per integration, structured as: "This is an MCP [Tool/Resource/Prompt] exposed by a server named [X]. The host is [Y]. MCP handles [specific responsibility]. The [auth/rate-limit/etc.] concern belongs to [layer/component] because [reason]."

Success criteria: If you can write all three paragraphs without hedging on the primitive classification, you've internalised the host/client/server separation well enough to proceed to the next chapter.

What's next

Chapter 1 answered the why. Chapter 2 answers the how — down to the byte level.

In courses/mcp-from-first-principles-to-production/02-json-rpc-wire-protocol you will dissect the JSON-RPC 2.0 envelope frame by frame, implement the full initialize → capabilities → request → response lifecycle by hand (no SDK), and build the 60-line Python server that handles tools/list and tools/call over raw stdin/stdout. You'll also learn when stdio transport breaks down and why Streamable HTTP (HTTP+SSE) is the right choice for remote servers — including what you give up when you leave stdio behind.

If you want context on where the protocol is heading before diving into wire-level details, blogs · mcp-2026-roadmap-explained covers the OAuth 2.1 + DPoP trajectory and the gateway discovery work planned for the second half of 2026.

References

[1]: Model Context Protocol Specification. https://spec.modelcontextprotocol.io/ · retrieved 2026-04-30

[2]: MCP 2026 Roadmap (Official Blog). https://blog.modelcontextprotocol.io/posts/2026-mcp-roadmap/ · retrieved 2026-04-30

[3]: Language Server Protocol Specification 3.17. https://microsoft.github.io/language-server-protocol/specifications/lsp/3.17/specification/ · retrieved 2026-04-30

[4]: JSON-RPC 2.0 Specification. https://www.jsonrpc.org/specification · retrieved 2026-04-30

[5]: MCP Specification GitHub Issues. https://github.com/modelcontextprotocol/specification/issues · retrieved 2026-04-30


JSON-RPC over stdio — the wire protocol explained

> Prerequisites: Chapter 1 (Why MCP exists). You should be able to sketch the host/client/server triad and articulate the N×M problem MCP solves.
>
> Time: 45 minutes
>
> What you'll be able to do: By the end of this chapter, you can read a raw MCP message exchange at the byte level, explain every design choice in the wire format, and write a working MCP server from scratch without an SDK. This hands-on fluency is what separates developers who can ship MCP integrations from developers who can merely configure them.

Why the wire format matters

Every MCP SDK — the Python mcp package, the TypeScript @modelcontextprotocol/sdk, the Rust crate — is an abstraction layer over the same wire protocol. When something goes wrong in production, the abstraction disappears and you're reading raw JSON in a log file or a debugger. If you don't know what that JSON should look like, you can't diagnose the problem.

More importantly: once you understand the wire format, the SDK stops being magic. Every SDK call maps to one or two JSON messages. Once you can see those messages, you can reason about performance (how many round-trips does a tool call require?), error handling (what does a structured error look like vs. a malformed request?), and security (what information is in the request that an attacker could exploit?).

This chapter builds that fluency from scratch.

JSON-RPC 2.0: the message envelope

MCP uses [[JSON-RPC 2.0]][1] as its message format. This is not an implementation detail — it's a deliberate choice with specific consequences. Let's understand it before we look at MCP-specific message types.

JSON-RPC 2.0 defines four message shapes:

Request (client → server, or server → client)

```json
{
  "jsonrpc": "2.0",
  "id": 1,
  "method": "tools/call",
  "params": {
    "name": "search_github",
    "arguments": { "query": "MCP protocol", "limit": 10 }
  }
}
```

The id field is critical: it's how the response is correlated back to this specific request. In a world where multiple requests can be in-flight simultaneously (the client doesn't wait for one response before sending the next), the id lets both sides match requests to their responses. The id can be a number or a string; MCP conventionally uses integers.
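The correlation described above can be sketched in a few lines. This is a minimal illustration, not how any particular SDK does it; the `PendingRequests` class and its method names are hypothetical.

```python
import itertools


class PendingRequests:
    """Tracks in-flight requests so responses can be matched by id."""

    def __init__(self):
        self._next_id = itertools.count(1)
        self._pending = {}  # id -> method name of the outstanding request

    def make_request(self, method, params=None):
        req_id = next(self._next_id)
        self._pending[req_id] = method
        return {"jsonrpc": "2.0", "id": req_id, "method": method, "params": params or {}}

    def resolve(self, response):
        """Return the method this response answers, or None if the id is unknown."""
        return self._pending.pop(response.get("id"), None)


pending = PendingRequests()
first = pending.make_request("tools/list")
second = pending.make_request("tools/call", {"name": "echo", "arguments": {"message": "hi"}})

# Responses may arrive in any order; the id says which request each one answers.
answered_second = pending.resolve({"jsonrpc": "2.0", "id": second["id"], "result": {}})
answered_first = pending.resolve({"jsonrpc": "2.0", "id": first["id"], "result": {"tools": []}})
```

A response whose id is not in the table (a duplicate, or a reply to a request that already timed out) resolves to `None`, which is usually worth logging.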

Response (server → client, or client → server)

```json
{
  "jsonrpc": "2.0",
  "id": 1,
  "result": {
    "content": [
      { "type": "text", "text": "Found 47 results for 'MCP protocol'" }
    ],
    "isError": false
  }
}
```

Or on error:

```json
{
  "jsonrpc": "2.0",
  "id": 1,
  "error": {
    "code": -32602,
    "message": "Invalid params",
    "data": { "field": "limit", "issue": "must be between 1 and 100" }
  }
}
```

The error and result fields are mutually exclusive. A valid JSON-RPC response has exactly one of them.

Notification (either direction, no response expected)

```json
{
  "jsonrpc": "2.0",
  "method": "notifications/tools/list_changed",
  "params": {}
}
```

Notice: no `id` field. A notification is a one-way message. The sender never expects a response. This is how MCP servers push events to clients (tool list changes, resource updates, progress on long-running operations) without the client polling.

Batch (array of requests/notifications)

```json
[
  { "jsonrpc": "2.0", "id": 1, "method": "tools/list", "params": {} },
  { "jsonrpc": "2.0", "method": "notifications/initialized", "params": {} }
]
```

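The 2025-03-26 revision of MCP accepts batch messages, but they are rarely used in practice: most SDK implementations send messages individually. Batching was dropped again in the 2025-06-18 revision, so avoid depending on it in new code.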
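The four shapes can be told apart from their fields alone. The sketch below shows one way to do it; `classify` is a hypothetical helper, and it checks only the envelope, not MCP-specific method names or params.

```python
def classify(message):
    """Return 'batch', 'request', 'notification', 'response', 'error', or 'invalid'."""
    if isinstance(message, list):
        return "batch" if message else "invalid"  # an empty batch is not valid
    if not isinstance(message, dict) or message.get("jsonrpc") != "2.0":
        return "invalid"
    if "method" in message:
        # A request carries an id; a notification does not.
        return "request" if "id" in message else "notification"
    has_result = "result" in message
    has_error = "error" in message
    # A response needs an id and exactly one of result / error.
    if "id" in message and has_result != has_error:
        return "error" if has_error else "response"
    return "invalid"
```

Note that a response carrying both `result` and `error` is classified as invalid, matching the mutual-exclusion rule above.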

<KnowledgeCheck

question="Which JSON-RPC field is ABSENT in a notification but REQUIRED in a request?"

options={[

"jsonrpc",

"method",

"id",

"params"

]}

correctIdx={2}

explanation="A notification has no id field because no response is expected. The id in a request is what allows the receiver to correlate the response back to the specific request. Notifications are one-way messages — the sender never expects a reply, so there is nothing to correlate."

/>

The stdio transport: why a pipe beats a socket

The technical choice

For local MCP servers — servers running on the same machine as the host — the MCP spec defines [[MCP stdio transport|stdio transport]]: the host launches the server as a subprocess and communicates via stdin (host → server) and stdout (server → host). Each message is a JSON object terminated by a single newline character (\n).[2]

This is newline-delimited JSON, also called NDJSON or JSON Lines. The framing rule is brutally simple: read until \n, parse what you got as JSON, process it.

```
[host writes to server stdin]
{"jsonrpc":"2.0","id":1,"method":"initialize","params":{"protocolVersion":"2025-03-26","capabilities":{},"clientInfo":{"name":"claude-desktop","version":"1.0"}}}\n

[server writes to host stdout]
{"jsonrpc":"2.0","id":1,"result":{"protocolVersion":"2025-03-26","capabilities":{"tools":{},"resources":{}},"serverInfo":{"name":"github-mcp","version":"0.1.0"}}}\n

[host writes to server stdin: client signals readiness, no response expected]
{"jsonrpc":"2.0","method":"notifications/initialized","params":{}}\n
```
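The "read until \n, parse" rule is simple enough to sketch directly. The example below is an illustration under one assumption: bytes may arrive from the pipe in arbitrary chunks, so the reader has to buffer partial lines. `encode_message` and `LineDecoder` are hypothetical names.

```python
import json


def encode_message(obj):
    """Serialise one message as a single UTF-8 line terminated by a newline."""
    # json.dumps escapes newlines inside strings, so the only raw "\n" is the terminator.
    return (json.dumps(obj, separators=(",", ":")) + "\n").encode("utf-8")


class LineDecoder:
    """Accumulates bytes from a pipe and returns the complete JSON messages."""

    def __init__(self):
        self._buffer = b""

    def feed(self, chunk):
        self._buffer += chunk
        messages = []
        while b"\n" in self._buffer:
            line, self._buffer = self._buffer.split(b"\n", 1)
            if line.strip():  # skip blank lines
                messages.append(json.loads(line))
        return messages
```

Because the serialiser escapes embedded newlines, a tool result containing multi-line text still occupies exactly one line on the wire.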

Why not WebSockets?

WebSockets provide a persistent bidirectional connection — sounds like an obvious choice. Here's what you'd actually be choosing:

- Network stack dependency: WebSocket connections require a port, a network interface, and TLS for anything beyond localhost. Running a local MCP server on WebSockets means opening a port on the host machine, managing its lifecycle, ensuring it's not accessible from outside. This is solvable but it's operational overhead that stdio avoids entirely.

- Complex lifecycle management: A WebSocket server is a long-running process. The host needs to know where it's listening (port, hostname), how to restart it if it crashes, and how to clean up when the host exits. With stdio, the lifecycle is implicit: the host spawns a subprocess, and a well-behaved server exits when it reads end-of-file on stdin after the host closes its end of the pipe. Cleanup shrinks to closing the pipe and, if the process lingers, signalling it.

- Session multiplexing complexity: WebSocket servers often need to handle multiple concurrent connections. Stdio is inherently single-connection-per-process. For MCP's use case (one client per server connection), this simplicity is a feature.
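The implicit lifecycle in the second bullet can be seen in a short host-side sketch. The child process here is a hypothetical stand-in that answers every request with an empty result; it is not a real MCP server and skips the handshake entirely.

```python
import json
import subprocess
import sys

# Stand-in child process (hypothetical): replies to each request line with an empty result.
CHILD_SOURCE = r'''
import json, sys
for line in sys.stdin:
    msg = json.loads(line)
    reply = {"jsonrpc": "2.0", "id": msg["id"], "result": {}}
    sys.stdout.write(json.dumps(reply) + "\n")
    sys.stdout.flush()
'''


def run_session():
    # The host owns the server's lifetime: it spawns the process and holds both pipe ends.
    server = subprocess.Popen(
        [sys.executable, "-c", CHILD_SOURCE],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        text=True,
    )
    server.stdin.write(json.dumps({"jsonrpc": "2.0", "id": 1, "method": "ping"}) + "\n")
    server.stdin.flush()
    reply = json.loads(server.stdout.readline())

    # Closing stdin delivers EOF; the child's read loop ends and the process exits.
    server.stdin.close()
    exit_code = server.wait(timeout=10)
    server.stdout.close()
    return reply, exit_code
```

There is no port to allocate and no address to discover. A production host would still add a fallback (terminate, then kill) for servers that ignore EOF.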

Why not gRPC?

gRPC is mature, fast, and has excellent schema tooling via Protocol Buffers. The failure modes:

- Protobuf schema requirement: gRPC requires a .proto schema definition. Every new tool or resource change requires a schema update and re-compilation. MCP's JSON Schema approach allows runtime schema changes (a server can add new tools without recompiling anything).

- Binary format opacity: A gRPC message is not human-readable. Debugging a local MCP server by reading its stdio output is trivial with JSON. With Protobuf, you need a decoder.

- Tooling weight: gRPC requires a code generation step, language-specific runtimes, and for web/browser environments, special proxies (grpc-web). JSON-RPC needs only a JSON parser.

Why not REST?

REST over HTTP is request-response only. MCP needs bidirectionality: the server must be able to send unsolicited notifications to the client (tool list changes, resource updates, progress events). With REST, you'd need polling (inefficient, adds latency) or webhooks (requires the client to expose an HTTP server, which is complex for desktop apps). JSON-RPC over stdio gets bidirectionality for free: either side can write to its output at any time.

The initialize handshake: step by step

Every MCP session begins with a three-message handshake[4]. Understanding it prevents a surprising class of bugs where a server works in isolation but fails when connected to a real host.

Message 1: initialize (client → server)

```json
{
  "jsonrpc": "2.0",
  "id": 0,
  "method": "initialize",
  "params": {
    "protocolVersion": "2025-03-26",
    "capabilities": {
      "roots": { "listChanged": true },
      "sampling": {}
    },
    "clientInfo": {
      "name": "claude-desktop",
      "version": "1.2.0"
    }
  }
}
```

The client declares:

- protocolVersion: the latest protocol version the client supports. If the server supports that version, it must answer with the same one; otherwise it answers with a version it does support (ideally its latest), and the client should disconnect if it cannot speak that version.

- capabilities: what the client can do. roots.listChanged means the client will notify the server when the set of roots (workspaces) changes. sampling means the client supports the server requesting LLM completions via the host.

- clientInfo: for logging and diagnostics.

Message 2: initialize response (server → client)

```json
{
  "jsonrpc": "2.0",
  "id": 0,
  "result": {
    "protocolVersion": "2025-03-26",
    "capabilities": {
      "tools": { "listChanged": true },
      "resources": { "subscribe": true, "listChanged": true },
      "prompts": { "listChanged": true },
      "logging": {}
    },
    "serverInfo": {
      "name": "github-mcp",
      "version": "0.2.1"
    }
  }
}
```

The server declares its capabilities:

- tools.listChanged — the server will send notifications/tools/list_changed when its tool set changes.

- resources.subscribe — clients can subscribe to resource updates.

- logging — the server supports the logging/setLevel request.

Capabilities not declared here are not supported. If the client tries to use an undeclared capability, the server may return an error or silently ignore the request.

Message 3: notifications/initialized (client → server, notification)

```json
{
  "jsonrpc": "2.0",
  "method": "notifications/initialized",
  "params": {}
}
```

This is the client saying "I've processed your initialize response and I'm ready." No id, no response expected. After this, the session is live and the client can send any supported request.

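The handshake is strictly ordered. Until the server has answered initialize, the client should send nothing but pings; until it has received notifications/initialized, the server should send nothing but pings and log messages. This matters for implementations that try to pre-load tool lists before the handshake completes: a strict server may answer with a protocol error or close the connection.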
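One way a server might enforce this ordering is a small state machine in front of its dispatcher. The sketch below makes two assumptions that are not taken from the spec text: premature requests are rejected with -32600, and ping is allowed at any time. `HandshakeGate` is a hypothetical name.

```python
class HandshakeGate:
    """Tracks handshake progress for one session (server side)."""

    def __init__(self):
        self.state = "new"  # new -> initializing -> ready

    def check(self, message):
        """Return None if the message is acceptable now, else an error response dict."""
        method = message.get("method")
        if method == "initialize":
            if self.state != "new":
                return self._reject(message, "initialize sent twice")
            self.state = "initializing"
            return None
        if method == "notifications/initialized":
            if self.state == "initializing":
                self.state = "ready"
            return None
        if method == "ping" or self.state == "ready":
            return None
        return self._reject(message, "request received before handshake completed")

    @staticmethod
    def _reject(message, reason):
        # Assumption: -32600 (Invalid Request) is used for sequencing violations.
        return {"jsonrpc": "2.0", "id": message.get("id"),
                "error": {"code": -32600, "message": reason}}
```

The dispatcher would call `check` on every incoming request and send the returned error instead of handling the request whenever the result is not `None`.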

<KnowledgeCheck

question="After receiving the server's initialize response, what MUST the client send before any tool call is valid?"

options={[

"tools/list",

"notifications/initialized",

"initialize again",

"Nothing — the session is immediately live"

]}

correctIdx={1}

explanation="The MCP spec mandates that the client send notifications/initialized after processing the server's initialize response. This notification signals that the client is ready to proceed. Sending any other request — including tools/list or tools/call — before dispatching this notification is a protocol violation. The notification has no id because no response is expected."

/>

A client has just received the server's initialize response containing the server's capabilities. What is the NEXT message the client MUST send, and why? What happens if it skips this step and sends t…

Show expected output

The client must send 'notifications/initialized' — a JSON-RPC notification (no id) with method='notifications/initialized' and empty params. This signals to the server that the client has processed the initialize response and the session is live. If the client skips this and sends tools/list directly, it violates the MCP handshake protocol. Spec-compliant servers should treat any non-handshake request before notifications/initialized as a protocol error, potentially returning -32600 (Invalid Request) or closing the connection.

Reading a real MCP exchange

Let's trace a complete tool call from wire to result. The scenario: a user in Claude Desktop asks "What are my open GitHub PRs?" and the host invokes the list_pull_requests tool on a GitHub MCP server.

Step 1: Host discovers available tools.

```json
→ {"jsonrpc":"2.0","id":2,"method":"tools/list","params":{}}

← {
  "jsonrpc":"2.0",
  "id":2,
  "result":{
    "tools":[
      {
        "name":"list_pull_requests",
        "description":"List open pull requests for a repository",
        "inputSchema":{
          "type":"object",
          "properties":{
            "owner":{"type":"string","description":"GitHub org or username"},
            "repo":{"type":"string","description":"Repository name"},
            "state":{"type":"string","enum":["open","closed","all"],"default":"open"}
          },
          "required":["owner","repo"]
        }
      }
    ]
  }
}
```

The inputSchema is a JSON Schema object[3]. This is what the host passes to the model to describe the tool's calling convention. The model uses this schema to decide what arguments to generate.
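The same schema is what a server can check incoming arguments against before running the tool. The sketch below is deliberately not a full JSON Schema validator: it covers only `required`, `type`, and `enum`, which is an assumption about what a small server needs. `validate_arguments` is a hypothetical helper; a production server would more likely use a dedicated JSON Schema library.

```python
LIST_PRS_SCHEMA = {
    "type": "object",
    "properties": {
        "owner": {"type": "string", "description": "GitHub org or username"},
        "repo": {"type": "string", "description": "Repository name"},
        "state": {"type": "string", "enum": ["open", "closed", "all"], "default": "open"},
    },
    "required": ["owner", "repo"],
}

TYPE_CHECKS = {
    "string": lambda v: isinstance(v, str),
    "integer": lambda v: isinstance(v, int) and not isinstance(v, bool),
    "number": lambda v: isinstance(v, (int, float)) and not isinstance(v, bool),
    "boolean": lambda v: isinstance(v, bool),
    "array": lambda v: isinstance(v, list),
    "object": lambda v: isinstance(v, dict),
}


def validate_arguments(schema, arguments):
    """Return a list of problems; an empty list means these checks passed."""
    problems = []
    for name in schema.get("required", []):
        if name not in arguments:
            problems.append(f"missing required field: {name}")
    for name, value in arguments.items():
        spec = schema.get("properties", {}).get(name)
        if spec is None:
            continue  # unknown fields are tolerated in this sketch
        check = TYPE_CHECKS.get(spec.get("type"))
        if check and not check(value):
            problems.append(f"{name}: expected {spec['type']}")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"{name}: must be one of {spec['enum']}")
    return problems
```

A non-empty result maps naturally onto a -32602 (Invalid params) error, with the problem list placed in the error's `data` field.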

Step 2: Model decides to call the tool. Host sends tools/call.

```json
→ {
  "jsonrpc":"2.0",
  "id":3,
  "method":"tools/call",
  "params":{
    "name":"list_pull_requests",
    "arguments":{
      "owner":"anthropics",
      "repo":"anthropic-sdk-python",
      "state":"open"
    }
  }
}
```

Step 3: Server calls the GitHub API, returns the result.

```json
← {
  "jsonrpc":"2.0",
  "id":3,
  "result":{
    "content":[
      {
        "type":"text",
        "text":"Found 12 open PRs:\n1. #847: Add streaming support for tool_use...\n2. #821: Fix retry logic on 529..."
      }
    ],
    "isError":false
  }
}
```

Step 4: If the tool failed (GitHub API down, bad credentials):

```json
← {
  "jsonrpc":"2.0",
  "id":3,
  "result":{
    "content":[
      {
        "type":"text",
        "text":"GitHub API returned 401: Bad credentials. Check your GITHUB_TOKEN environment variable."
      }
    ],
    "isError":true
  }
}
```

Note: tool errors use isError: true in the result, NOT the JSON-RPC error field. The JSON-RPC error field is reserved for protocol errors (malformed request, unknown method). Separating protocol errors from tool errors is a deliberate design choice: a protocol error means the server couldn't understand the request; a tool error means the server understood the request but execution failed. The host handles these differently.
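On the host side, the distinction comes down to which field is present. The sketch below shows one way a host might sort a tools/call response into the three outcomes; `interpret_tool_response` is a hypothetical helper, and it only collects text content items.

```python
def interpret_tool_response(response):
    """Return (kind, text) where kind is 'protocol_error', 'tool_error', or 'ok'."""
    if "error" in response:
        # The server could not process the request at the protocol level.
        err = response["error"]
        return "protocol_error", f"{err['code']}: {err['message']}"
    result = response["result"]
    text = "\n".join(
        item["text"] for item in result.get("content", []) if item.get("type") == "text"
    )
    # The request was understood; isError says whether execution succeeded.
    return ("tool_error" if result.get("isError") else "ok"), text
```

A typical host passes tool-error text back to the model so it can retry or explain the failure, and treats protocol errors as bugs to log rather than content to show.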

I'm reading MCP server logs and I see this message arrive on stdin: {\

Show expected output

The expert covers: (1) validate jsonrpc='2.0', id exists, method='tools/call', params.name matches a known tool, params.arguments validates against the tool's inputSchema — if any fail, return a JSON-RPC error with code -32602. (2) result.content array with text content and isError:false. (3) result.content with the error message and isError:true — NOT a JSON-RPC error object, because the protocol worked fine; only the tool execution failed.

HTTP Streaming transport: when stdio isn't enough

Stdio is perfect for local servers. But you can't run a stdio process when the MCP server is a cloud API — when the server is a SaaS vendor's integration, a company-wide shared endpoint, or a multi-tenant service.

The MCP spec defines an HTTP Streaming transport for remote servers. The spec's own name for it is Streamable HTTP; it replaced the earlier HTTP+SSE transport in the 2025-03-26 revision.[2] The key differences:

Connection model: Instead of a subprocess pipe, the client makes HTTP POST requests to a single endpoint. The server answers either with one JSON body or with a Server-Sent Events (SSE) stream, which lets it push multiple messages in one HTTP response.

Message format: Same JSON-RPC 2.0 envelope, different framing. Over stdio a newline ends each message; over HTTP each message travels as an HTTP request body, a JSON response body, or the data field of an SSE event. The payload is identical; only the carrier changes.

Bidirectionality: Because HTTP is inherently request-initiated, server-to-client notifications can't be sent spontaneously. The older HTTP+SSE transport handled this with a separate long-lived SSE endpoint. Streamable HTTP lets the server stream multiple JSON-RPC messages in the response to a POST, and lets the client open an SSE stream with a GET to the same endpoint for messages that are not tied to any request.

Session management: HTTP is stateless. The server can assign a session ID during initialization, returned in the Mcp-Session-Id response header, and the client then includes that header on every subsequent request so the server can correlate requests to the same logical session.

Auth: HTTP headers carry auth credentials (Bearer tokens, DPoP proofs — covered in Chapter 4). Stdio has no native auth mechanism; security relies on process-level isolation instead.
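To make the session and auth points concrete, here is a sketch of how a client might assemble one POST. It builds the headers and body without sending anything. The header names reflect my reading of the Streamable HTTP transport (`Mcp-Session-Id`, and an `Accept` header listing both JSON and SSE) and should be checked against the spec revision you target; `build_post` is a hypothetical helper.

```python
import json


def build_post(message, session_id=None, bearer_token=None):
    """Return (headers, body) for one JSON-RPC message sent as an HTTP POST."""
    headers = {
        "Content-Type": "application/json",
        # The client advertises that it accepts a plain JSON body or an SSE stream.
        "Accept": "application/json, text/event-stream",
    }
    if session_id is not None:
        # Assumption: the session ID issued during initialization is echoed in this header.
        headers["Mcp-Session-Id"] = session_id
    if bearer_token is not None:
        headers["Authorization"] = f"Bearer {bearer_token}"
    return headers, json.dumps(message).encode("utf-8")
```

The JSON-RPC payload is the same object a stdio client would write to the pipe; only the wrapping differs.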

The choice between transports is architectural:

| Scenario | Transport |
|---|---|
| Local tool running on developer's machine | stdio |
| Internal service running in Kubernetes | HTTP Streaming |
| SaaS vendor's integration endpoint | HTTP Streaming |
| CI/CD pipeline action | stdio (subprocess) |
| Multi-tenant shared MCP server | HTTP Streaming |
| Desktop IDE plugin | stdio |

A team is building a SaaS vendor integration that will serve thousands of tenants, each making concurrent MCP requests. Which MCP transport should they choose, and what are the three main protocol dif…

Show expected output

The team should choose HTTP Streaming transport. Three key differences vs stdio: (1) Session management: HTTP is stateless, so each request must include a session identifier in request headers so the server can correlate requests to the same logical session; (2) Server-push mechanism: stdio lets the server write to stdout at any time, but HTTP needs an SSE stream, either as the streaming response to a POST or opened separately by the client, for server-to-client notifications; (3) Authentication: HTTP headers carry Bearer tokens or DPoP proofs for per-request auth, while stdio relies on OS process isolation with no native auth mechanism.

Hands-on exercise: a 60-line MCP server, no SDK

This is the most important exercise in the course. You're going to write an MCP server from scratch, in Python, using only sys.stdin and sys.stdout. No mcp package. This forces you to confront every design decision we've discussed.

What the server will do: Handle tools/list (returns one tool: echo) and tools/call for the echo tool (returns whatever string argument was passed). This is the low-level equivalent of the patterns you'll find in the official Python SDK[5], implemented without any abstraction so every design choice is visible.

Complete implementation:

```python
#!/usr/bin/env python3
"""
Minimal MCP server over stdio. No SDK. Raw JSON-RPC 2.0.

Run:  python3 echo_server.py
Test: echo '{"jsonrpc":"2.0","id":0,"method":"initialize","params":{"protocolVersion":"2025-03-26","capabilities":{},"clientInfo":{"name":"test","version":"1.0"}}}' | python3 echo_server.py
"""
import json
import sys

PROTOCOL_VERSION = "2025-03-26"

TOOLS = [
    {
        "name": "echo",
        "description": "Returns the input string unchanged. Useful for testing.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "message": {"type": "string", "description": "Text to echo back"}
            },
            "required": ["message"],
        },
    }
]


def send(obj: dict) -> None:
    """Write a JSON-RPC message to stdout. stderr only for logs."""
    line = json.dumps(obj, separators=(",", ":"))
    sys.stdout.write(line + "\n")
    sys.stdout.flush()


def error_response(req_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


def handle(msg: dict) -> None:
    method = msg.get("method")
    req_id = msg.get("id")  # None for notifications
    params = msg.get("params", {})

    if method == "initialize":
        send({
            "jsonrpc": "2.0",
            "id": req_id,
            "result": {
                "protocolVersion": PROTOCOL_VERSION,
                "capabilities": {"tools": {}},
                "serverInfo": {"name": "echo-server", "version": "0.1.0"},
            },
        })
        # The server stops here. The CLIENT then sends notifications/initialized
        # to signal readiness, never the other way around.

    elif method == "tools/list":
        send({"jsonrpc": "2.0", "id": req_id, "result": {"tools": TOOLS}})

    elif method == "tools/call":
        tool_name = params.get("name")
        args = params.get("arguments", {})
        if tool_name == "echo":
            message = args.get("message", "")
            send({
                "jsonrpc": "2.0",
                "id": req_id,
                "result": {"content": [{"type": "text", "text": message}], "isError": False},
            })
        else:
            # tools/call exists, but the tool name is a bad parameter: Invalid params.
            send(error_response(req_id, -32602, f"Unknown tool: {tool_name}"))

    elif req_id is not None:
        # Unknown method with an id: return method-not-found error
        send(error_response(req_id, -32601, f"Method not found: {method}"))
    # Unknown notifications are silently ignored (no id, no response expected)


def main() -> None:
    print("echo-server starting", file=sys.stderr)
    for line in sys.stdin:
        line = line.strip()
        if not line:
            continue
        try:
            msg = json.loads(line)
        except json.JSONDecodeError as e:
            send(error_response(None, -32700, f"Parse error: {e}"))
            continue
        if not isinstance(msg, dict):
            # Valid JSON but not an object; this minimal server does not implement batches.
            send(error_response(None, -32600, "Invalid Request: expected a JSON object"))
            continue
        handle(msg)


if __name__ == "__main__":
    main()
```

Testing it from the terminal (sequence matters — you need the initialize handshake first):

```bash
# Save as echo_server.py, then:
python3 echo_server.py << 'EOF'
{"jsonrpc":"2.0","id":0,"method":"initialize","params":{"protocolVersion":"2025-03-26","capabilities":{},"clientInfo":{"name":"test","version":"1.0"}}}
{"jsonrpc":"2.0","method":"notifications/initialized","params":{}}
{"jsonrpc":"2.0","id":1,"method":"tools/list","params":{}}
{"jsonrpc":"2.0","id":2,"method":"tools/call","params":{"name":"echo","arguments":{"message":"hello MCP"}}}
EOF
```

Expected output (one JSON object per line on stdout):

```json
{"jsonrpc":"2.0","id":0,"result":{"protocolVersion":"2025-03-26","capabilities":{"tools":{}},"serverInfo":{"name":"echo-server","version":"0.1.0"}}}
{"jsonrpc":"2.0","id":1,"result":{"tools":[{"name":"echo","description":"Returns the input string unchanged. Useful for testing.","inputSchema":{"type":"object","properties":{"message":{"type":"string","description":"Text to echo back"}},"required":["message"]}}]}}
{"jsonrpc":"2.0","id":2,"result":{"content":[{"type":"text","text":"hello MCP"}],"isError":false}}
```

What to verify:

- Every response has the same id as its corresponding request

- The client's notifications/initialized (line 2 of the input) produces no server output — it's a notification with no id, so the server correctly produces no response

- stderr shows "echo-server starting" (logs stay off stdout)

- A call to an unknown tool returns a JSON-RPC error object, not isError:true in result

Estimated time: 20 minutes to type/copy, run, and understand the output.

Review this Python MCP server implementation and identify three specific improvements that would make it production-ready. Focus on: error handling edge cases, logging, and correctness of the JSON-RPC…

Show expected output

The reviewer identifies: (1) No handling for stdin closing (EOF) — the for loop exits silently when stdin closes; a production server should log the shutdown and call sys.exit(0) for clean process termination. (2) No validation that the 'jsonrpc' field equals '2.0' — a malformed client could send a JSON object that passes json.loads but is not a valid JSON-RPC envelope, and the server would attempt to process it. (3) No handshake state machine — the server accepts tools/call or tools/list before the initialize handshake completes, violating the MCP spec's sequencing requirement; a robust implementation should track whether notifications/initialized has been received and return -32600 for premature requests.

<KnowledgeCheck question="Your MCP server is crashing but you see no error in the client. You add print() statements to debug. After adding them, the client starts receiving parse errors on every response. What is the most likely cause?" options={[ "The JSON encoder is broken by the print() call", "print() writes to stdout by default, corrupting the JSON-RPC message stream with debug text", "The client is too strict about whitespace in JSON", "print() adds a BOM (byte order mark) that breaks JSON parsing" ]} correctIdx={1} explanation="This is the single most common MCP debugging mistake. print() in Python writes to stdout by default. Since stdout is the protocol channel, any non-JSON text (including debug prints) will corrupt the message stream and cause the client to get parse errors. Always use print(..., file=sys.stderr) or a logging handler configured to write to stderr or a file. Never write anything to stdout except valid JSON-RPC messages." />

Error codes reference

JSON-RPC 2.0 defines standard error codes[1] that MCP uses for protocol-level errors:

| Code | Name | When to use |
|---|---|---|
| -32700 | Parse error | Invalid JSON received |
| -32600 | Invalid Request | Valid JSON but not a valid JSON-RPC message |
| -32601 | Method not found | Unknown method name |
| -32602 | Invalid params | Method exists but params are wrong type/missing required |
| -32603 | Internal error | Server-side bug during processing |

JSON-RPC reserves the range -32000 to -32099 for implementation-defined server errors, and MCP-specific error codes are drawn from that range. Application-specific errors (your tool's domain errors) go in result.isError:true, not in the error field.

What's next

In Chapter 3, we zoom out from the wire protocol to the three semantic primitives: Tools, Resources, and Prompts. You now understand how any MCP message travels from client to server and back. Chapter 3 teaches you what to put in those messages — specifically, which primitive is the right abstraction for any given integration requirement. This is where most developers make wrong choices that are expensive to fix later.

References cited

[1]: JSON-RPC 2.0 Specification — Defines the message envelope format, error codes, notification semantics, and batch requests that MCP uses verbatim.

[2]: MCP Transports Specification — Defines stdio transport (newline-delimited JSON over subprocess pipes) and HTTP Streaming transport for remote servers.

[3]: JSON Schema Specification — The inputSchema field in MCP tool definitions is a JSON Schema object. Familiarity with draft 2020-12 is useful for writing precise tool definitions.

[4]: MCP Core Specification (2025-03-26) — The authoritative specification for the Model Context Protocol, covering the full message lifecycle, capability negotiation, and the initialize handshake sequencing requirements. URLs verified 200 OK as of 2026-04-30.

[5]: MCP Python SDK — The official Python SDK for MCP servers and clients; the mcp package this chapter intentionally avoids to make the raw wire protocol visible.

- MCP 2026 Roadmap, Transport Evolution: The roadmap priority on HTTP streaming and .well-known metadata directly affects how remote MCP servers are discovered and connected.


Tools, Resources, Prompts — the three primitives and the decision rule

> Prerequisites: Chapter 1 (the N×M problem and host/client/server triad) and Chapter 2 (the wire protocol). You should have the echo-server from Chapter 2 working.
>
> Time: 40 minutes
>
> What you'll be able to do: By the end of this chapter, you can classify any integration requirement as Tool, Resource, or Prompt without hesitation, understand the protocol messages for each, and implement the Resources primitive with URI templating. This decision fluency is what separates an MCP server that's easy to maintain from one that gradually becomes an incoherent mess.

The mistake almost every developer makes

Ask a developer to build an MCP server for their internal knowledge base. Nine out of ten will build a Tool called search_docs that takes a query string and returns matching documents. Fast to write. Reasonable API. Completely wrong primitive.

The knowledge base is read-only data the model should be able to access, not a query the model should execute. The correct primitive is a Resource — possibly many Resources, one per document, with a URI scheme like docs://handbook/engineering/onboarding. The model reads resources; the host decides which resources to surface. Conflating these means you're burning tokens on tool invocations when you could be injecting context directly, and you're losing the host's ability to pre-load commonly-needed documents before the model even asks.

Understanding the three primitives at the level of their design intent — not just their API shape — is the difference between an MCP server that works and one that works well.

Tools — what the model executes

One-sentence definition: A Tool is an operation the model initiates that may have side effects and returns a result.

The keyword is may have side effects. Tools are the only MCP primitive where mutation is expected and permitted. Creating a Jira ticket, running a SQL query, sending a Slack message, executing a shell command — all of these are Tools because they change something outside the conversation.

Wire format

Tools are declared via tools/list and invoked via tools/call. You've seen both in Chapter 2. The critical field is inputSchema — a JSON Schema object that the host passes to the model to describe what arguments the tool accepts.[1]

A well-designed inputSchema is worth spending time on. It directly shapes the quality of model-generated tool calls:

```json
{
  "name": "create_github_issue",
  "description": "Create a new issue in a GitHub repository. Use when the user explicitly asks to create or file a bug report or feature request.",
  "inputSchema": {
    "type": "object",
    "properties": {
      "owner": {
        "type": "string",
        "description": "GitHub org or username that owns the repository"
      },
      "repo": {
        "type": "string",
        "description": "Repository name (without the owner prefix)"
      },
      "title": {
        "type": "string",
        "description": "Issue title. Should be concise and descriptive (under 80 characters)."
      },
      "body": {
        "type": "string",
        "description": "Issue body in GitHub-flavored Markdown. Include: description, steps to reproduce (if bug), expected vs actual behavior."
      },
      "labels": {
        "type": "array",
        "items": { "type": "string" },
        "description": "Label names to apply. Must match existing labels in the repo.",
        "default": []
      }
    },
    "required": ["owner", "repo", "title"]
  }
}
```

Here is a poorly-written `inputSchema` for a `send_slack_message` tool. Identify 3 specific problems and rewrite it.\n\n```json\n{\n \

Show expected output

A good answer identifies: (1) Description is too vague — 'Send a message' gives the model no signal about when to call it, which platform, or how channel should be formatted (Slack channel ID like C01234567 vs display name). (2) No required fields — both channel and message should be required; the model could omit them and produce an invalid call. (3) No property descriptions — the model doesn't know the channel must be a Slack channel ID, or that message supports Slack markdown, or that urgent defaults to false. The rewrite should add a useful tool description with a usage trigger, mark channel/message as required, add property descriptions with format hints, and add a default of false for urgent.

Three principles for good tool schemas:

1. The `description` field is a prompt. The model reads it to decide when and how to call the tool. Vague descriptions produce vague calls.

2. Required vs optional matters. Put the minimum viable set in required. Optional fields with sensible defaults make the model's job easier.

3. Enum constraints reduce hallucination. If a field has a fixed set of valid values, use "enum": [...]. The model is more likely to pass valid values when it knows the allowed set.
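The first two principles are mechanical enough to check automatically. The sketch below is a hypothetical lint pass, `lint_tool`, that a team could run over its tool definitions in CI; the five-word threshold for descriptions is an arbitrary assumption, not a rule from the spec.

```python
def lint_tool(tool):
    """Return a list of warnings about a tool definition; empty means no findings."""
    warnings = []
    # Principle 1: the description is a prompt, so it needs real content.
    if len(tool.get("description", "").split()) < 5:
        warnings.append("tool description is missing or too short to guide the model")
    schema = tool.get("inputSchema", {})
    properties = schema.get("properties", {})
    # Principle 2: declare the minimum viable set of required fields.
    if properties and not schema.get("required"):
        warnings.append("no required fields declared")
    for name, spec in properties.items():
        if not spec.get("description"):
            warnings.append(f"property '{name}' has no description")
    return warnings
```

The third principle (prefer enums for closed value sets) is harder to lint, because only the tool author knows whether a string field really has a fixed set of values.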

Control flow: model-initiated

This is the distinguishing property. The model decides when to call a tool, whether to call it at all, and what arguments to pass. The host shows the model the list of available tools and executes tool calls on the model's behalf. It cannot force the model to use a specific tool, but because it sits between the model and the server, it can ask the user to confirm a call or decline to forward it.

This means Tools have a different security posture than Resources: any user who can talk to the model can potentially trigger any Tool in the server's list. Chapter 4 covers how OAuth scopes and gateway RBAC address this.

Resources — what the model reads

One-sentence definition: A Resource is read-only data identified by a URI that either the model or the application can inject into context.

The "either the model or the application" part is why Resources exist as a separate primitive. With Tools, only the model initiates. With Resources, the host application can also proactively inject resource content into context without the model asking. Claude Desktop can decide to load the contents of file:///Users/alice/project/README.md into context before the conversation starts. The model never had to "call" anything; the host made an editorial decision about what context to provide.

Wire format

Resources are listed via resources/list and read via resources/read.[2] Each resource has a URI and a MIME type:

```json
{
  "jsonrpc": "2.0",
  "id": 4,
  "result": {
    "resources": [
      {
        "uri": "docs://handbook/engineering/onboarding",
        "name": "Engineering Onboarding Guide",
        "description": "Step-by-step guide for new engineers joining the team",
        "mimeType": "text/markdown"
      },
      {
        "uri": "docs://handbook/engineering/incident-response",
        "name": "Incident Response Runbook",
        "mimeType": "text/markdown"
      }
    ]
  }
}
```

To read a resource:

```json
→ {"jsonrpc":"2.0","id":5,"method":"resources/read","params":{"uri":"docs://handbook/engineering/onboarding"}}

← {
  "jsonrpc":"2.0",
  "id":5,
  "result":{
    "contents":[
      {
        "uri":"docs://handbook/engineering/onboarding",
        "mimeType":"text/markdown",
        "text":"# Engineering Onboarding Guide\n\n## Week 1\n..."
      }
    ]
  }
}
```

The content can be text (for text/markdown, text/plain, application/json) or blob (base64-encoded binary, for images, PDFs, etc.).[2]

URI templating: the power feature

Static URIs are fine for a fixed set of resources. Real-world resources are dynamic: files in a repo, rows in a database, objects in S3. For these, MCP supports resource templates — URI patterns with variable placeholders:[2]

```json
{
  "uriTemplate": "github://{owner}/{repo}/blob/{branch}/{path}",
  "name": "GitHub file content",
  "description": "Read the content of any file in any GitHub repository at any branch",
  "mimeType": "text/plain"
}
```

The {owner}, {repo}, {branch}, and {path} placeholders follow RFC 6570 URI Template syntax.[4] The host expands the template with actual values (either model-generated or app-provided) and sends the expanded URI in resources/read.

This enables a single resource template to represent billions of concrete resources — every file, in every repo, at every branch — without the server having to enumerate them.
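Template expansion itself is a small string operation. The sketch below handles only the simplest RFC 6570 form, plain `{name}` placeholders, and percent-encodes each value; it is an illustration, not a complete RFC 6570 implementation, and `expand` is a hypothetical helper.

```python
import re
from urllib.parse import quote

TEMPLATE = "github://{owner}/{repo}/blob/{branch}/{path}"


def expand(template, **values):
    """Expand simple {name} placeholders, percent-encoding each value."""
    def substitute(match):
        # safe="" means reserved characters such as "/" are encoded too.
        return quote(str(values[match.group(1)]), safe="")
    return re.sub(r"\{(\w+)\}", substitute, template)


readme_uri = expand(TEMPLATE, owner="anthropics", repo="anthropic-sdk-python",
                    branch="main", path="README.md")
nested_uri = expand(TEMPLATE, owner="anthropics", repo="anthropic-sdk-python",
                    branch="main", path="src/app.py")
```

One design point worth noticing: under simple expansion the slash in a nested path is percent-encoded, so `nested_uri` ends in `src%2Fapp.py`. RFC 6570 also defines a reserved expansion form, written `{+path}`, that leaves slashes intact; which form a server's template should use depends on how the server parses the URI it receives.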

Resource subscriptions

For resources that change (a live config file, a database view, real-time metrics), the server can support subscriptions. The client sends resources/subscribe with a URI; the server sends notifications/resources/updated when the content changes. The client then re-reads the resource.

This is the pull-on-push pattern: notifications tell you something changed; you fetch the new content yourself. The server never pushes large payloads proactively.
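A minimal sketch of the server-side bookkeeping, assuming a single connected client. The `SubscriptionRegistry` class and its method names are hypothetical; only the `notifications/resources/updated` method name and its `uri` parameter come from the protocol.

```python
class SubscriptionRegistry:
    """Tracks which URIs the client has subscribed to (single-client sketch)."""

    def __init__(self) -> None:
        self._uris = set()

    def subscribe(self, uri: str) -> None:
        self._uris.add(uri)

    def unsubscribe(self, uri: str) -> None:
        self._uris.discard(uri)

    def notification_for(self, uri: str):
        """Return the message to send when `uri` changes, or None if nobody subscribed."""
        if uri not in self._uris:
            return None
        # A notification has no "id" and carries no content: the client re-reads.
        return {
            "jsonrpc": "2.0",
            "method": "notifications/resources/updated",
            "params": {"uri": uri},
        }


if __name__ == "__main__":
    registry = SubscriptionRegistry()
    registry.subscribe("docs://handbook/engineering/onboarding")
    print(registry.notification_for("docs://handbook/engineering/onboarding"))
```

The notification deliberately contains only the URI. The client decides whether and when to issue a fresh resources/read, which keeps large payloads off the notification path.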

I'm building an MCP server for a company's internal documentation system (Confluence-like). The system has: 10,000 pages, 500 spaces, real-time page updates when editors save. Design the Resources sch…

Show expected output

A good answer: (1) URI scheme like docs://{space}/{page-id} or docs://{space}/{page-slug} — page-slug is human-readable but can have conflicts; page-id is stable. (2) Resource templates for individual pages (can't enumerate 10k pages statically), plus static resources for space/index pages. (3) Yes subscriptions — editors saving pages should push updates to clients that have loaded the page into context; otherwise stale content is injected. The answer should also flag that 10k pages is too many for a full static list — pagination via cursor is needed.

Prompts — what the user selects

One-sentence definition: A Prompt is a user-initiated, parameterised message template that the host exposes as a selectable option in its UI.

This is the least-understood primitive. Many developers skip Prompts entirely because they look optional — and they're wrong.

The key distinction: Tools are model-initiated; Prompts are user-initiated. The user looks at a menu of available Prompts in their host application, selects one (like selecting a Slash Command), fills in the arguments, and the host renders the Prompt template into a message that kicks off the conversation.

Wire format

Prompts are listed via prompts/list and fetched via prompts/get:[3]

```json
{
  "prompts": [
    {
      "name": "code_review",
      "description": "Generate a thorough code review for a pull request",
      "arguments": [
        {
          "name": "pr_url",
          "description": "GitHub pull request URL",
          "required": true
        },
        {
          "name": "focus",
          "description": "What to focus on: security, performance, style, or all",
          "required": false
        }
      ]
    }
  ]
}
```

When the user selects this prompt and provides arguments, the host sends prompts/get:

```json
→ {
  "jsonrpc": "2.0",
  "id": 6,
  "method": "prompts/get",
  "params": {
    "name": "code_review",
    "arguments": {
      "pr_url": "https://github.com/anthropics/sdk-python/pull/847",
      "focus": "security"
    }
  }
}

← {
  "jsonrpc": "2.0",
  "id": 6,
  "result": {
    "description": "Code review prompt for PR #847",
    "messages": [
      {
        "role": "user",
        "content": {
          "type": "text",
          "text": "Please review this pull request with a focus on security vulnerabilities:\n\nhttps://github.com/anthropics/sdk-python/pull/847\n\nFor each issue found:\n1. Describe the vulnerability\n2. Assess the severity (critical/high/medium/low)\n3. Suggest a specific fix\n4. Note any positive security practices you observe"
        }
      }
    ]
  }
}
```

The server returns rendered messages — the actual text that will be injected into the conversation. The Prompt is the template; prompts/get with arguments is the render step.
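The render step can be sketched in a few lines. Everything below is an illustrative assumption rather than a prescribed implementation: the `PROMPTS` registry, the use of `str.format` as the templating mechanism, the shortened template text, and the `"all"` default for the optional `focus` argument.

```python
PROMPTS = {
    "code_review": {
        "description": "Generate a thorough code review for a pull request",
        "arguments": [
            {"name": "pr_url", "required": True},
            {"name": "focus", "required": False},
        ],
        # Assumption: a str.format template; the protocol does not prescribe one.
        "template": "Please review this pull request with a focus on {focus}:\n\n{pr_url}",
    }
}

DEFAULTS = {"focus": "all"}  # hypothetical default for the optional argument


def render_prompt(name: str, arguments: dict) -> dict:
    """Build the `result` object of a prompts/get response."""
    prompt = PROMPTS[name]
    missing = [
        arg["name"]
        for arg in prompt["arguments"]
        if arg["required"] and arg["name"] not in arguments
    ]
    if missing:
        raise ValueError(f"Missing required arguments: {', '.join(missing)}")
    text = prompt["template"].format(**{**DEFAULTS, **arguments})
    return {
        "description": prompt["description"],
        "messages": [{"role": "user", "content": {"type": "text", "text": text}}],
    }


if __name__ == "__main__":
    print(render_prompt("code_review", {"pr_url": "https://github.com/anthropics/sdk-python/pull/847", "focus": "security"}))
```

Validating required arguments before rendering means the server can answer with a JSON-RPC error instead of returning a message with a hole in it.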

Design a `prompts/list` response for a customer-support MCP server. Then show the `prompts/get` response for one of those prompts with realistic arguments filled in.

Show expected output

A good answer: prompts/list should have 2-4 prompts such as 'draft_reply' (arguments: ticket_id, tone), 'summarise_ticket' (arguments: ticket_id), 'escalation_template' (arguments: ticket_id, reason). The prompts/get response should show a realistic messages array — the actual rendered text with argument values substituted in — not just a template string. The rendered message should be something a support agent would actually send, with the ticket context embedded.

When Prompts beat system prompts

Prompts have a structural advantage over static system prompt text: they're discoverable, named, and parameterised at runtime. A host application can show users a searchable library of available Prompts across all connected MCP servers. This is the equivalent of Slash Commands in Slack or Linear — a user interface for structured intent.

Use a Prompt when:

- The task is user-initiated and repeatable (code review, draft an email, analyse a document)

- The task has well-known arguments that vary per invocation

- You want the task to appear as a named, discoverable option in the host UI

<KnowledgeCheck question="A developer wants users to be able to generate a weekly status report by filling in 'team name' and 'date range'. Should this be a Tool, Resource, or Prompt?" options={[ "Tool — the model decides when to generate it", "Resource — the report data is read-only", "Prompt — user-initiated, parameterised, repeatable template", "None of the above" ]} correctIdx={2} explanation="Prompts are the right primitive for user-initiated, parameterised, repeatable tasks. The user selects 'Weekly Status Report', fills in team and date range, and the host renders the template. A Tool would put the model in charge of initiating it. A Resource is for data the host injects, not conversation templates." />

The decision rule

Every integration requirement can be classified with three questions:

Who initiates?

- Model decides autonomously → Tool

- User selects from a menu → Prompt

- App pre-loads into context (or model requests by URI) → Resource

What does it do?

- May write, create, delete, or trigger side effects → Tool (not Resource, never Prompt)

- Reads data, produces output → could be Tool or Resource; continue to next question

- Provides a structured conversation template → Prompt

Who should control the access policy?

- App/platform makes a policy decision about what data is available → Resource

- Model decides dynamically based on conversation context → Tool
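The three questions can also be written down as a small function, which is a handy way to test the rule against your own requirements list. This is one possible encoding, not an official algorithm: the parameter names and string values are assumptions, and the sketch checks side effects first because that is the one hard constraint in the rule.

```python
def classify(
    initiator: str,                 # "model", "user", or "app"
    has_side_effects: bool = False,
    is_template: bool = False,      # a structured conversation template
    policy_owner: str = "app",      # "app" or "model"
) -> str:
    """Return "Tool", "Resource", or "Prompt" for one integration requirement."""
    # Anything that may write, create, delete, or trigger is a Tool.
    if has_side_effects:
        return "Tool"
    # User-selected conversation templates are Prompts.
    if is_template or initiator == "user":
        return "Prompt"
    # Data the app pre-loads into context is a Resource.
    if initiator == "app":
        return "Resource"
    # Model-initiated and read-only: the access-policy question breaks the tie.
    return "Resource" if policy_owner == "app" else "Tool"


if __name__ == "__main__":
    print(classify("model", policy_owner="model"))   # search internal messages
    print(classify("model", has_side_effects=True))  # create a ticket
    print(classify("user", is_template=True))        # post-mortem template
    print(classify("app"))                           # style guidelines document
```

Requirements that land on the final line are the genuinely ambiguous ones, where the access-policy question is the only thing separating a Tool from a Resource.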

As a quick-reference table:

| Requirement | Primitive | Why |
|---|---|---|
| Search internal Slack messages | Tool | Model-initiated, returns results on demand |
| Current on-call schedule (read-only, changes daily) | Resource | App can pre-load; model reads by URI; no side effects |
| Create a Jira ticket | Tool | Side effects (creates an object); model-initiated |
| "Draft incident post-mortem" template | Prompt | User-selected; parameterised by incident ID |
| List all employees in HR system | Resource (template) | Read-only data; URI scheme hr://employees |
| Provision a cloud VM | Tool | Irreversible side effect; model-initiated with confirmation |
| Coding style guidelines document | Resource | Static content; app pre-loads into context |
| "Explain this error" workflow | Prompt | User-initiated; arguments: error message, language |

A team wants to add 'get recent alerts from PagerDuty (last 24 hours)' to their MCP server. Apply the three-question decision rule and classify it as Tool, Resource, or Prompt. Justify each answer.

Show expected output

A good answer works through all three questions: (1) Who initiates? — This is ambiguous; could be model-initiated on demand (Tool) or app-pre-loaded (Resource). The 'last 24 hours' filter makes it dynamic. (2) What does it do? — Read-only, no side effects — could be Tool or Resource. (3) Who controls access policy? — The on-call team's tooling (app) decides what alert data is available; the model doesn't need to decide dynamically. Best classification: Resource with a URI like pagerduty://alerts/recent and a subscription for live updates. The model or host can read it by URI. A Tool would be correct only if the query needs dynamic parameters (e.g. arbitrary time ranges the model decides on). The answer should flag the tradeoff between static Resource (simpler, cacheable) and dynamic Tool (flexible query).

<KnowledgeCheck question="Your team's MCP server has a tool called get_current_user_profile that takes no arguments and returns the authenticated user's profile JSON. It's called at the start of almost every conversation. What's wrong with this design and what's the better primitive?" options={[ "Nothing is wrong — a zero-argument Tool is valid MCP", "It should be a Resource at a URI like user://me/profile, so the host can pre-load it without a tool call", "It should be a Prompt that renders the profile into a system message", "Tools must have at least one argument; zero-argument tools are invalid MCP" ]} correctIdx={1} explanation="A zero-argument Tool that returns static (per-session) data is a Resource in disguise. Every time the model calls this Tool, it's spending an LLM inference turn and a round-trip to the server just to fetch data that the host could have pre-loaded. The correct design is a Resource at a URI like user://me/profile. The host loads it at session start and injects it into context. The model never has to 'ask' for it." />

Hands-on exercise: classify and implement a GitHub integration

Part 1 — Classification (10 min)

Given this GitHub integration requirements list, classify each as Tool, Resource, or Prompt with one sentence of justification:

1. List all open pull requests for a repository

2. Read the content of any file at any commit SHA

3. Create a new pull request

4. Get the authenticated user's GitHub profile (read-only, stable per session)

5. Search code across all repos by keyword

6. "Write a release announcement" template that takes version number and changelog

7. Get the CI/CD status for a specific commit SHA

8. Subscribe to PR review notifications

Reference answers:

1. Tool — model-initiated, read-only but dynamic (PRs change constantly); needs to be on-demand

2. Resource — URI template github://{owner}/{repo}/blob/{sha}/{path}, read-only, model or app can load by URI

3. Tool — creates a new object (side effect), model-initiated with arguments

4. Resource — URI github://user/profile, stable per session, host pre-loads

5. Tool — model-initiated search across a dynamic corpus; results change; side-effect-free but dynamic

6. Prompt — user-selected template, arguments: version and changelog text

7. Resource — URI github://{owner}/{repo}/commit/{sha}/status, read-only status object

8. (Trick question) Notification subscriptions are not a primitive; they're an optional capability of Resources (resources/subscribe plus notifications/resources/updated). This is a Resource with subscription enabled.

Part 2 — Implement the Resources endpoint (10 min)

Add a resources/list and resources/read handler to the echo server from Chapter 2. Implement the GitHub file resource with URI templating.

```python
import re

# Matches URIs produced by the template github://{owner}/{repo}/blob/{branch}/{path}
GITHUB_FILE_RE = re.compile(
    r"^github://(?P<owner>[^/]+)/(?P<repo>[^/]+)/blob/(?P<branch>[^/]+)/(?P<path>.+)$"
)


def handle_resource_read(uri: str, req_id) -> None:
    # send() and error_response() are assumed to be the helpers from the Chapter 2 echo server
    m = GITHUB_FILE_RE.match(uri)
    if not m:
        send(error_response(req_id, -32602, f"Unsupported resource URI: {uri}"))
        return
    owner, repo, branch, path = m.group("owner"), m.group("repo"), m.group("branch"), m.group("path")
    # In a real server: fetch from GitHub API with auth
    # For this exercise: return a simulated response
    content = f"# Simulated content\nowner={owner}, repo={repo}, branch={branch}, path={path}"
    send({
        "jsonrpc": "2.0",
        "id": req_id,
        "result": {
            "contents": [{"uri": uri, "mimeType": "text/plain", "text": content}]
        }
    })
```

Verification: Test with:

```bash
echo '{"jsonrpc":"2.0","id":5,"method":"resources/read","params":{"uri":"github://anthropics/sdk-python/blob/main/README.md"}}' | python3 echo_server.py
```

Confirm you get a valid result.contents response, not an error.
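The exercise also asks for a resources/list handler. A minimal sketch follows, with several assumptions called out: `send` here is a stand-in for the Chapter 2 helper, `STATIC_RESOURCES` is a hypothetical one-entry catalogue, and the template is advertised through a separate resources/templates/list result with a `resourceTemplates` key, which is how the published MCP specification lists templates as far as I know; confirm that against the spec version you target.

```python
import json
import sys

# Hypothetical static catalogue for the exercise server.
STATIC_RESOURCES = [
    {
        "uri": "docs://handbook/engineering/onboarding",
        "name": "Engineering Onboarding Guide",
        "mimeType": "text/markdown",
    },
]

RESOURCE_TEMPLATES = [
    {
        "uriTemplate": "github://{owner}/{repo}/blob/{branch}/{path}",
        "name": "GitHub file content",
        "mimeType": "text/plain",
    },
]


def send(message: dict) -> None:
    """Stand-in for the Chapter 2 helper: one JSON message per line on stdout."""
    sys.stdout.write(json.dumps(message) + "\n")
    sys.stdout.flush()


def resources_list_result() -> dict:
    """Result body for resources/list: concrete, enumerable resources only."""
    return {"resources": STATIC_RESOURCES}


def resource_templates_result() -> dict:
    """Result body for resources/templates/list (assumed method name, see above)."""
    return {"resourceTemplates": RESOURCE_TEMPLATES}


def handle_resources_list(req_id) -> None:
    send({"jsonrpc": "2.0", "id": req_id, "result": resources_list_result()})


def handle_resource_templates_list(req_id) -> None:
    send({"jsonrpc": "2.0", "id": req_id, "result": resource_templates_result()})


if __name__ == "__main__":
    handle_resources_list(4)
    handle_resource_templates_list(5)
```

Keeping the result builders separate from the `send` calls makes the handlers easy to unit-test without capturing stdout.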

<KnowledgeCheck question="In your own words: describe a real integration from your work (or a tool you use daily) where the wrong choice between Tool and Resource would cause a measurable quality problem — either wasted tokens, stale context, or missed capability. Be specific about what the right choice is and why." options={["self-check"]} correctIdx={0} explanation="Strong answers are concrete and specific. Example of a strong answer: 'Our team's MCP server has a tool called get_company_policy that returns a 3000-word document. Every conversation starts with the model calling it. Using Tool instead of Resource means: (1) a tool call round-trip at the start of every conversation (~1 second latency), (2) the model has to explicitly decide to call it rather than the host injecting it. Changing to Resource lets the host pre-load it; the model doesn't waste a turn fetching context it always needs.'" />

What's next